Chatbots Online Tutorials

A chatbot (originally chatterbot) is a software application or web interface designed to have textual or spoken conversations. Modern chatbots are typically online and use generative artificial intelligence systems that are capable of maintaining a conversation with a user in natural language and simulating the way a human would behave as a conversational partner. Such chatbots often use deep learning and natural language processing, but simpler chatbots have existed for decades.

Although chatbots have existed since the late 1960s, the field gained widespread attention in the early 2020s due to the popularity of OpenAI's ChatGPT, followed by alternatives such as Microsoft's Copilot, DeepSeek and Google's Gemini. Such examples reflect the recent practice of basing such products upon broad foundational large language models, such as GPT-4 or the Gemini language model, that get fine-tuned so as to target specific tasks or applications (i.e., simulating human conversation, in the case of chatbots). Chatbots can also be designed or customized to further target even more specific situations and/or particular subject-matter domains.

A major area where chatbots have long been used is in customer service and support, with various sorts of virtual assistants. Companies spanning a wide range of industries have begun using the latest generative artificial intelligence technologies to power more advanced developments in such areas.

History

Turing test

In 1950, Alan Turing's famous article "Computing Machinery and Intelligence" was published, which proposed what is now called the Turing test as a criterion of intelligence. This criterion depends on the ability of a computer program to impersonate a human in a real-time written conversation with a human judge to the extent that the judge is unable to distinguish reliably, on the basis of the conversational content alone, between the program and a real human.

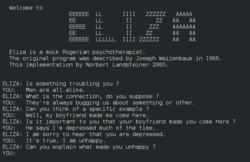

ELIZA

The notoriety of Turing's proposed test stimulated great interest in Joseph Weizenbaum's program ELIZA, published in 1966, which seemed to be able to fool users into believing that they were conversing with a real human. However, Weizenbaum himself did not claim that ELIZA was genuinely intelligent, and the introduction to his paper presented it more as a debunking exercise:

In artificial intelligence, machines are made to behave in wondrous ways, often sufficient to dazzle even the most experienced observer. But once a particular program is unmasked, once its inner workings are explained, its magic crumbles away; it stands revealed as a mere collection of procedures. The observer says to himself "I could have written that". With that thought, he moves the program in question from the shelf marked "intelligent", to that reserved for curios. The object of this paper is to cause just such a re-evaluation of the program about to be "explained". Few programs ever needed it more.

ELIZA's key method of operation involves the recognition of clue words or phrases in the input, and the output of the corresponding pre-prepared or pre-programmed responses that can move the conversation forward in an apparently meaningful way (e.g. by responding to any input that contains the word 'MOTHER' with 'TELL ME MORE ABOUT YOUR FAMILY'). Thus an illusion of understanding is generated, even though the processing involved has been merely superficial. ELIZA showed that such an illusion is surprisingly easy to generate because human judges are ready to give the benefit of the doubt when conversational responses are capable of being interpreted as "intelligent".
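The clue-word mechanism described above can be sketched in a few lines of Python. This is a minimal illustration rather than Weizenbaum's actual program: only the MOTHER rule comes from the text, while the other rules, the default reply, and the names `RULES` and `respond` are assumptions made for the example.

```python
# Hypothetical rule table: clue word -> canned reply. Only the MOTHER rule
# comes from the article text; the other entries are invented for illustration.
RULES = {
    "MOTHER": "TELL ME MORE ABOUT YOUR FAMILY",
    "FATHER": "TELL ME MORE ABOUT YOUR FAMILY",
    "DREAM": "WHAT DOES THAT DREAM SUGGEST TO YOU",
}
DEFAULT_REPLY = "PLEASE GO ON"  # assumed fallback when no clue word is found


def respond(user_input: str) -> str:
    """Return the canned reply for the first clue word found in the input."""
    # Upper-case the input and strip surrounding punctuation from each word.
    words = [w.strip(".,!?;:'\"") for w in user_input.upper().split()]
    for word in words:
        if word in RULES:
            return RULES[word]
    return DEFAULT_REPLY
```

Calling `respond("My mother never listens to me.")` returns the family prompt even though nothing about the sentence has been understood, which is the superficial processing the paragraph describes.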

Interface designers have come to appreciate that humans' readiness to interpret computer output as genuinely conversational—even when it is actually based on rather simple pattern-matching—can be exploited for useful purposes. Most people prefer to engage with programs that are human-like, and this gives chatbot-style techniques a potentially useful role in interactive systems that need to elicit information from users, as long as that information is relatively straightforward and falls into predictable categories. Thus, for example, online help systems can usefully employ chatbot techniques to identify the area of help that users require, potentially providing a "friendlier" interface than a more formal search or menu system. This sort of usage holds the prospect of moving chatbot technology from Weizenbaum's "shelf ... reserved for curios" to that marked "genuinely useful computational methods".

Early chatbots

Among the most notable early chatbots are ELIZA (1966) and PARRY (1972). More recent notable programs include A.L.I.C.E., Jabberwacky and D.U.D.E (Agence Nationale de la Recherche and CNRS 2006). While ELIZA and PARRY were used exclusively to simulate typed conversation, many chatbots now include other functional features, such as games and web searching abilities. In 1984, a book called The Policeman's Beard is Half Constructed was published, allegedly written by the chatbot Racter (though the program as released would not have been capable of doing so).

From 1978 to some time after 1983, the CYRUS project led by Janet Kolodner constructed a chatbot simulating Cyrus Vance (57th United States Secretary of State). It used case-based reasoning, and updated its database daily by parsing wire news from United Press International. The program was unable to process the news items subsequent to the surprise resignation of Cyrus Vance in April 1980, and the team constructed another chatbot simulating his successor, Edmund Muskie.

One pertinent field of AI research is natural-language processing. Usually, weak AI fields employ specialized software or programming languages created specifically for the narrow function required. For example, A.L.I.C.E. uses a markup language called AIML, which is specific to its function as a conversational agent and has since been adopted by various other developers of so-called Alicebots. Nevertheless, A.L.I.C.E. is still based purely on pattern-matching techniques without any reasoning capabilities, the same technique ELIZA was using back in 1966. This is not strong AI, which would require sapience and logical reasoning abilities.
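To make "purely based on pattern matching" concrete, the sketch below pairs wildcard patterns with reply templates, loosely in the spirit of AIML's pattern and template pairs. It is a simplified Python stand-in, not the AIML language or the A.L.I.C.E. implementation; the categories, the `{star}` placeholder, and the function names are all assumptions made for illustration.

```python
import re

# Hypothetical categories: a wildcard pattern and a reply template.
# "*" matches any text; "{star}" in the template echoes what it matched.
CATEGORIES = [
    ("I LIKE *", "What do you like about {star}?"),
    ("I FEEL *", "Why do you feel {star}?"),
    ("*", "I am not sure I understand."),  # catch-all, tried last
]


def compile_pattern(pattern: str) -> re.Pattern:
    """Turn a wildcard pattern into an anchored regular expression."""
    parts = [re.escape(part) for part in pattern.split("*")]
    return re.compile("^" + "(.+)".join(parts) + "$")


COMPILED = [(compile_pattern(p), template) for p, template in CATEGORIES]


def normalize(text: str) -> str:
    """Upper-case the input and drop everything except letters, digits, spaces."""
    return re.sub(r"[^A-Z0-9 ]", "", text.upper()).strip()


def reply(text: str) -> str:
    """Return the template of the first category whose pattern matches."""
    cleaned = normalize(text)
    for regex, template in COMPILED:
        match = regex.match(cleaned)
        if match:
            star = match.group(1).lower() if match.groups() else ""
            return template.format(star=star)
    return ""  # only reached for empty input
```

The reply to "I like green tea!" reuses the user's own words without any model of what tea is, which is why such systems are described as lacking reasoning capabilities.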

Jabberwacky learns new responses and context based on real-time user interactions, rather than being driven from a static database. Some more recent chatbots also combine real-time learning with evolutionary algorithms that optimize their ability to communicate based on each conversation held.

Chatbot competitions focus on the Turing test or more specific goals. Two such annual contests are the Loebner Prize and The Chatterbox Challenge (the latter has been offline since 2015, although materials can still be found in web archives).

DBpedia created a chatbot during Google Summer of Code (GSoC) 2017. It can communicate through Facebook Messenger.

Modern chatbots based on large language models

Modern chatbots like ChatGPT are often based on large language models called generative pre-trained transformers (GPT). These models use a deep learning architecture called the transformer, which contains artificial neural networks. They learn how to generate text by being trained on a large text corpus, which provides a solid foundation for the model to perform well on downstream tasks with limited amounts of task-specific data. Despite criticism of its accuracy and its tendency to "hallucinate" (that is, to confidently output false information and even cite non-existent sources), ChatGPT has gained attention for its detailed responses and historical knowledge. Another example is BioGPT, developed by Microsoft, which focuses on answering biomedical questions. In November 2023, Amazon announced a new chatbot, called Q, for people to use at work.

Application

Messaging apps

Many companies' chatbots run on messaging apps or simply via SMS. They are used for B2C customer service, sales and marketing.

In 2016, Facebook Messenger allowed developers to place chatbots on their platform. There were 30,000 bots created for Messenger in the first six months, rising to 100,000 by September 2017.

Since September 2017, chatbots have also been available as part of a pilot program on WhatsApp. Airlines KLM and Aeroméxico both announced their participation in the testing; both airlines had previously launched customer services on the Facebook Messenger platform.

The bots usually appear as one of the user's contacts, but can sometimes act as participants in a group chat.

Many banks, insurers, media companies, e-commerce companies, airlines, hotel chains, retailers, health care providers, government entities, and restaurant chains have used chatbots to answer simple questions, increase customer engagement, run promotions, and offer additional ways to order from them. Chatbots are also used in market research to collect short survey responses.

A 2017 study showed 4% of companies used chatbots. In a 2016 study, 80% of businesses said they intended to have one by 2020.

As part of company apps and websites

Previous generations of chatbots were present on company websites, e.g. Ask Jenn from Alaska Airlines, which debuted in 2008, or Expedia's virtual customer service agent, which launched in 2011. The newer generation of chatbots includes IBM Watson-powered "Rocky", introduced in February 2017 by the New York City-based e-commerce company Rare Carat to provide information to prospective diamond buyers.

Chatbot sequences

Chatbot sequences are used by marketers to script sequences of messages, much like an autoresponder sequence. Such sequences can be triggered by user opt-in or by the use of keywords within user interactions. After a trigger occurs, a sequence of messages is delivered until the next anticipated user response. Each user response feeds into a decision tree that helps the chatbot navigate the response sequences and deliver the correct response message.
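A scripted sequence of this kind can be modeled as a small decision tree in which each node lists the messages to deliver and the keywords that select the next node. The Python sketch below is a minimal illustration under that assumption; the node names, messages, and keywords are invented, and no particular marketing platform's format is implied.

```python
# Hypothetical sequence: each node holds the messages to deliver and the
# keyword -> next-node branches that the user's reply selects from.
SEQUENCE = {
    "start": {
        "messages": ["Thanks for opting in!", "Are you shopping for shoes or hats?"],
        "branches": {"shoes": "shoes", "hats": "hats"},
    },
    "shoes": {"messages": ["Our shoe sale ends on Friday."], "branches": {}},
    "hats": {"messages": ["New hats arrive next week."], "branches": {}},
}


def deliver(node: str) -> list:
    """Return the messages sent when the conversation reaches this node."""
    return list(SEQUENCE[node]["messages"])


def next_node(node: str, user_reply: str) -> str:
    """Follow the branch whose keyword appears in the reply; otherwise stay put."""
    reply = user_reply.lower()
    for keyword, target in SEQUENCE[node]["branches"].items():
        if keyword in reply:
            return target
    return node
```

A conversation starts with `deliver("start")` after the opt-in trigger, waits for the user's reply, and then calls `next_node` to decide which messages to deliver next.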

Company internal platforms

Companies have used chatbots for customer support, human resources, or in Internet-of-Things (IoT) projects. Overstock.com, for one, has reportedly launched a chatbot named Mila to attempt to automate certain processes when customer service employees request sick leave. Other large companies such as Lloyds Banking Group, Royal Bank of Scotland, Renault and Citroën are now using chatbots instead of call centres with humans to provide a first point of contact. In large organizations, such as hospitals and aviation organizations, chatbots are also used to share information internally and to assist or replace service desks.

Customer service

Chatbots have been proposed as a replacement for customer service departments.

Deep learning techniques can be incorporated into chatbot applications to allow them to map conversations between users and customer service agents, especially in social media.

In 2019, Gartner predicted that by 2021, 15% of all customer service interactions globally would be handled completely by AI. A 2019 study by Juniper Research estimated that retail sales resulting from chatbot-based interactions would reach $112 billion by 2023.

In 2016, Russia-based Tochka Bank launched a chatbot on Facebook for a range of financial services, including the ability to make payments. In July 2016, Barclays Africa also launched a Facebook chatbot.

In 2023, the US-based National Eating Disorders Association replaced its human helpline staff with a chatbot but had to take it offline after users reported receiving harmful advice from it.

Healthcare

Chatbots are also appearing in the healthcare industry. A study suggested that physicians in the United States believed that chatbots would be most beneficial for scheduling doctor appointments, locating health clinics, or providing medication information.

ChatGPT is able to answer user queries related to health promotion and disease prevention such as screening and vaccination. WhatsApp has teamed up with the World Health Organization (WHO) to make a chatbot service that answers users' questions on COVID-19.

In 2020, the Government of India launched a chatbot called MyGov Corona Helpdesk that worked through WhatsApp and helped people access information about the coronavirus (COVID-19) pandemic.

Certain patient groups are still reluctant to use chatbots. A mixed-methods 2019 study showed that people are still hesitant to use chatbots for their healthcare due to poor understanding of the technological complexity, the lack of empathy, and concerns about cyber-security. The analysis showed that while 6% had heard of a health chatbot and 3% had experience of using it, 67% perceived themselves as likely to use one within 12 months. The majority of participants would use a health chatbot for seeking general health information (78%), booking a medical appointment (78%), and looking for local health services (80%). However, a health chatbot was perceived as less suitable for seeking results of medical tests and seeking specialist advice such as sexual health.

The analysis of attitudinal variables showed that most participants reported their preference for discussing their health with doctors (73%) and having access to reliable and accurate health information (93%). While 80% were curious about new technologies that could improve their health, 66% reported only seeking a doctor when experiencing a health problem and 65% thought that a chatbot was a good idea. In addition, 30% reported that they disliked talking to computers, 41% felt it would be strange to discuss health matters with a chatbot, and about half were unsure whether they could trust the advice given by a chatbot. Therefore, perceived trustworthiness, individual attitudes towards bots, and dislike for talking to computers are the main barriers to health chatbots.

Politics

In New Zealand, the chatbot SAM (short for Semantic Analysis Machine[59]) has been developed by Nick Gerritsen of Touchtech.[60] It is designed to share its political thoughts on topics such as climate change, healthcare and education. It talks to people through Facebook Messenger.[61][62][63][64]

In 2022, the chatbot "Leader Lars" or "Leder Lars" was nominated for The Synthetic Party to run in the Danish parliamentary election,[65] and was built by the artist collective Computer Lars.[66] Leader Lars differed from earlier virtual politicians by leading a political party and by not pretending to be an objective candidate.[67] This chatbot engaged in critical discussions on politics with users from around the world.[68]

In India, the Maharashtra state government has launched a chatbot for its Aaple Sarkar platform,[69] which provides conversational access to information regarding the public services it manages.[70][71]

Toys

Chatbots have also been incorporated into devices not primarily meant for computing, such as toys.[72]

Hello Barbie is an Internet-connected version of the doll that uses a chatbot provided by the company ToyTalk,[73] which previously used the chatbot for a range of smartphone-based characters for children.[74] These characters' behaviors are constrained by a set of rules that in effect emulate a particular character and produce a storyline.[75]

The My Friend Cayla doll was marketed as a line of 18-inch (46 cm) dolls that use speech recognition technology in conjunction with an Android or iOS mobile app to recognize the child's speech and hold a conversation. Like the Hello Barbie doll, it attracted controversy due to vulnerabilities in the doll's Bluetooth stack and its use of data collected from the child's speech.

IBM's Watson computer has been used as the basis for chatbot-based educational toys for companies such as CogniToys,[72] intended to interact with children for educational purposes.[76]

Malicious use

Malicious chatbots are frequently used to fill chat rooms with spam and advertisements by mimicking human behavior and conversations or to entice people into revealing personal information, such as bank account numbers. They were commonly found on Yahoo! Messenger, Windows Live Messenger, AOL Instant Messenger and other instant messaging protocols. There has also been a published report of a chatbot used in a fake personal ad on a dating service's website.[77]

Tay, an AI chatbot designed to learn from previous interaction, caused major controversy due to it being targeted by internet trolls on Twitter. Soon after its launch, the bot was exploited, and with its "repeat after me" capability, it started releasing racist, sexist, and controversial responses to Twitter users.[78] This suggests that although the bot learned effectively from experience, adequate protection was not put in place to prevent misuse.[79]

If a text-sending algorithm can pass itself off as a human instead of a chatbot, its message would be more credible. Therefore, human-seeming chatbots with well-crafted online identities could start scattering fake news that seems plausible, for instance making false claims during an election. With enough chatbots, it might be even possible to achieve artificial social proof.[80][81]

Data security

Data security is one of the major concerns of chatbot technologies. Security threats and system vulnerabilities are weaknesses that are often exploited by malicious users. Storage of user data and past communication, which is highly valuable for the training and development of chatbots, can also give rise to security threats.[82] Chatbots operating on third-party networks may be subject to various security issues if owners of the third-party applications have policies regarding user data that differ from those of the chatbot.[82] Security threats can be reduced or prevented by incorporating protective mechanisms. User authentication, end-to-end encryption of chats, and self-destructing messages are some effective solutions to resist potential security threats.[82]
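Of the protective mechanisms listed above, self-destructing messages are the simplest to illustrate. The Python sketch below keeps each message only until an assumed time-to-live has passed. It is an in-memory illustration with hypothetical names (`ExpiringMessageStore`, `ttl_seconds`), it does not cover authentication or encryption, and a real system would also need to erase copies held in logs and backups.

```python
import time


class ExpiringMessageStore:
    """Keeps chat messages only until their time-to-live has elapsed."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self.ttl_seconds = ttl_seconds
        self.clock = clock  # injectable so that tests can control time
        self._messages = []  # list of (expiry_time, text) pairs

    def add(self, text: str) -> None:
        """Store a message together with the time at which it expires."""
        self._messages.append((self.clock() + self.ttl_seconds, text))

    def _purge(self) -> None:
        """Drop every message whose expiry time has been reached."""
        now = self.clock()
        self._messages = [(exp, t) for exp, t in self._messages if exp > now]

    def read_all(self) -> list:
        """Return the texts of all messages that have not yet expired."""
        self._purge()
        return [text for _, text in self._messages]
```

Expired messages are purged on every read, so stored conversation history cannot accumulate beyond the chosen lifetime.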

Mental health

Chatbots are an emerging technology in the field of mental health. Their use may encourage users to seek advice on matters of mental health as a means to avoid the stigmatization that may come from sharing such matters with other people.[83] This is because chatbots can give a sense of privacy and anonymity when sharing sensitive information, as well as providing a space in which the user is free of judgment.[83] An example of this can be seen in a study which found that, with social media and AI chatbots both being possible outlets to express mental health online, users were more willing to share their darker and more depressive emotions with the chatbot.[83]

Findings suggest that chatbots have great potential in scenarios in which it is difficult for users to reach out to family or friends for support.[83] They have been noted to give young people "various types of social support such as appraisal, informational, emotional, and instrumental support".[83] Studies have found that chatbots are able to assist users in managing conditions such as depression and anxiety.[83] Some examples of chatbots that serve this function are "Woebot, Wysa, Vivibot, and Tess".[83]

Evidence indicates that when mental health chatbots interact with users, they tend to follow one of three conversation flows: guided conversation, semi-guided conversation, and open-ended conversation.[84] The most popular, guided conversation, “only allows the users to communicate with the chatbot with predefined responses from the chatbot. It does not allow any form of open input from the users”.[84] A study looking at the methods employed by various mental health chatbots also noted that most of them used a form of cognitive behavior therapy with the user.[84]

Research has identified potential barriers to entry that come with the use of chatbots for mental health.[85] There are ongoing privacy concerns about sharing users' personal data in chat logs with chatbots.[85] In addition, people of lower socioeconomic status are less willing to adopt interactions with chatbots as a meaningful way to improve their mental health.[85] Though chatbots may be capable of detecting simple human emotions in interactions with users, they are incapable of replicating the level of empathy that human therapists show.[85] Because chatbots are language models trained on numerous datasets, the issue of algorithmic bias exists.[85] Biases built into a chatbot through its training can surface against individuals of certain backgrounds and may result in incorrect information being conveyed.[85]

There is a lack of research about how exactly these interactions help with a user’s real life.[84] Additionally, there are concerns regarding the safety of users when interacting with such chatbots.[84] When improvements and advancements are made to such technologies, how that may affect humans is not a priority.[84] It is possible that this can lead to "unintended negative consequences, such as biases, inadequate and failed responses, and privacy issues".[84]

One risk of using chatbots to deal with mental health is increased isolation, as well as a lack of support in times of crisis.[84] Another notable risk is a general lack of a strong understanding of mental health.[84] Studies have indicated that mental-health-oriented chatbots have been prone to recommending medical solutions to users and to urging users to rely heavily on themselves.[84]

Limitations of chatbots

Chatbots have difficulty managing non-linear conversations that must go back and forth on a topic with a user.[86]

Large language models are more versatile, but require a large amount of conversational data to train. These models, which generate new responses word by word based on user input, are usually trained on a large dataset of natural-language phrases.[3] They sometimes provide plausible-sounding but incorrect or nonsensical answers. They can make up names, dates, historical events, and even simple math problems.[87] When large language models produce coherent-sounding but inaccurate or fabricated content, this is referred to as "hallucinations". When humans use and apply chatbot content contaminated with hallucinations, this results in "botshit".[88] Given the increasing adoption and use of chatbots for generating content, there are concerns that this technology will significantly reduce the cost it takes humans to generate misinformation.[89]
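Word-by-word generation can be illustrated with a far simpler stand-in than a large language model. The Python sketch below counts which word follows which in a tiny invented corpus and then extends a prompt by repeatedly appending the most frequent follower. This is a bigram counter, not a transformer, and the corpus and function names are assumptions made for the example. It does show why fluent output need not be accurate: each word is chosen for being statistically likely, not for being true.

```python
from collections import Counter, defaultdict

# Tiny invented corpus; real models are trained on vastly larger datasets.
CORPUS = "the cat sat on the mat . the cat ate the fish ."


def train(text: str) -> dict:
    """Count, for each word, which words follow it in the corpus."""
    followers = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers


def generate(followers: dict, prompt: str, max_words: int = 5) -> str:
    """Extend the prompt one word at a time with the most frequent follower."""
    output = prompt.split()
    if not output:
        return ""
    for _ in range(max_words):
        options = followers.get(output[-1])
        if not options:
            break  # the last word was never seen with a follower
        output.append(options.most_common(1)[0][0])
    return " ".join(output)
```

For instance, `generate(train(CORPUS), "on", 2)` continues the prompt with "the" and then "cat", simply because those are the most common continuations in the corpus.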

Impact on jobs

Chatbots, and technology in general, were traditionally used to automate repetitive tasks. But advanced chatbots like ChatGPT are also targeting high-paying, creative, and knowledge-based jobs, raising concerns about workforce disruption and quality trade-offs in favor of cost-cutting.[90]

Chatbots are increasingly used by small and medium enterprises to handle customer interactions efficiently, reducing reliance on large call centers and lowering operational costs.[91]

Prompt engineering, the task of designing and refining prompts (inputs) that lead to desired AI-generated responses, has quickly gained significant demand with the advent of large language models,[92] although the viability of this job is questioned due to new techniques for automating prompt engineering.[93]

Impact on the environment

Generative AI uses a high amount of electric power. Due to reliance on fossil fuels in its generation, this increases air pollution, water pollution, and greenhouse gas emissions. In 2023, a question to ChatGPT consumed on average 10 times as much energy as a Google search.[94] Data centres in general, and those used for AI tasks specifically, consume significant amounts of water for cooling.[95][96]

See also

- Applications of artificial intelligence

- Artificial human companion

- Artificial intelligence and elections

- Autonomous agent

- Conversational user interface

- Dead Internet theory

- Friendly artificial intelligence

- Hybrid intelligent system

- Intelligent agent

- Internet bot

- List of chatbots

- Multi-agent system

- Social bot

- Software agent

- Software bot

- Stochastic parrot

- Technological unemployment

- Twitterbot

References

Further reading

- Gertner, Jon. (2023) "Wikipedia's Moment of Truth: Can the online encyclopedia help teach A.I. chatbots to get their facts right — without destroying itself in the process?" New York Times Magazine (18 July 2023) online

- Searle, John (1980), "Minds, Brains and Programs", Behavioral and Brain Sciences, 3 (3): 417–457, doi:10.1017/S0140525X00005756, S2CID 55303721

- Shevat, Amir (2017). Designing bots: Creating conversational experiences (First ed.). Sebastopol, CA: O'Reilly Media. ISBN 978-1-4919-7482-7. OCLC 962125282.

- Vincent, James, "Horny Robot Baby Voice: James Vincent on AI chatbots", London Review of Books, vol. 46, no. 19 (10 October 2024), pp. 29–32. "[AI chatbot] programs are made possible by new technologies but rely on the timeless human tendency to anthropomorphise." (p. 29.)

External links

- Media related to Chatbots at Wikimedia Commons

- Conversational bots at Wikibooks
